“THE COLUMBIA ANALYTIC PROCESS SCALE: INTERRATER RELIABILITY AND CONSTRUCT VALIDITY”,

by Susan Vaughan, Robert Spitzer, Mark Davies and Steven Roose.

Discussion by Sherwood Waldron Jr. at the American Psychoanalytic Association Research in Progress Meeting, New York, December 20, 1997

First, I would like to review the three components used to assess the presence of psychoanalytic process in this ingenious and forward-looking study: free association, interpretation, and working through. The reliability of each of these measures contributes vitally to the reliability of the overall assessment. The assessments of Interpretation and of Working Through address two sides of the analytic process: what the analyst contributes is assessed, to some degree, through interpretations, and what the patient accomplishes is assessed through Working Through. It makes sense to assess both parties to the process. What about the assessment of Free Association itself? The free association item serves as an initial screen, so to speak, in that its absence (for the portion of the session rated) would show that the patient’s part of the work was not analytic in the customary sense. I suspect there are two reasons why free association was included: first, it is historically a distinctive aspect of the way the patient is supposed to work in analysis; second, a measure with some demonstrated reliability is available for assessing it. Still, I wonder whether making free association one of the three legs that determine whether psychoanalytic process is taking place gives it more prominence than it deserves. I believe the instrument is more clearly explained by emphasizing the assessment of the patient’s contribution and that of the analyst. I come to this view because over the past thirteen years my research group has developed what we call the Analytic Process Scales, and we actually have two scales, one for the contribution of the analyst and the other for that of the patient. We find that they work very well as complementary assessments, and the CAPS has this same twofold focus in a way that makes a lot of sense. The specific features the CAPS assesses in the analyst and in the patient cover ground comparable to our dozen variables for analyst and patient.

For purposes of this discussion, I will first address the assessment of the analyst’s contribution to the process. The focus in the CAPS is on measuring interpretation, which is one aspect of what we study in a group I lead in New York called the Analytic Process Scales Group, whose directors are Bob Scharf, Steve Firestein and Anna Burton. We have succeeded in obtaining reliable ratings of interpretation after spending several years studying instances in which our analyst raters disagreed significantly. As the measure is designed in this study, interpretation is sharply differentiated from clarification, following the work of Piper on psychotherapy cases. However, in our study of analyses we found that in sessions with higher-quality analytic process, clarifications were highly interwoven with interpretations; in fact, based on our findings, we hypothesize that interpretations with a large clarifying component are more effective. Our group has also found that the distinction between interpretation and clarification is the one our senior analysts disagree about most. So the sharp differentiation in your study probably lowers agreement between judges in a way that could be avoided by treating clarification as an important analytic activity related to interpretation. We found that the distinction between them depends on the degree to which the rater estimates that what is drawn to the patient’s attention is something the patient was previously aware of, or a connection between two elements the patient was previously aware of. That distinction is inherently quite inferential and difficult to make even if the patient were one’s own. I would also like to note that the CAPS measure assesses whether the analyst approaches transference matters, resistance, and genetic aspects, as well as the approach to fantasy. Thus the assessment of interpretation includes several central psychoanalytic components, the very same ones we evaluate in our instrument, with the exception of the approach to fantasy; our comparable variable is somewhat different, namely the approach to conflict itself. So, judged against the range of variables we have developed over many years, the CAPS comes out looking very good. The variables it does not assess that we consider important are the non-interpretive aspects of the work, such as encouraging elaboration and other interventions that support the treatment process and/or the patient without being interpretive, and the quality of the interventions, including how well the analyst follows the patient. But what is being assessed is sufficiently important and central that I believe the CAPS measure is well suited to its purpose of measuring the analyst’s functioning with the patient.

Now, turning to the patient’s contribution to the process, measured by what the authors place under the category of Working Through, we may first ask what is being worked through. Here we need to think of conflict and defense, and consider that as individuals work through their previous ways of dealing with painful affect and the maladaptive defenses that ensued, they develop more effectively functioning defenses, which lead to more adaptive resolutions of conflict. In terms of what could be seen by studying analytic sessions, the concept implies first that the individual is moving toward conscious processing of previously less conscious conflicts; in other words, they are becoming more familiar with them than they were before. If this definition is acceptable, how would we go about actually measuring such a complex and highly inferential process? The only empirical study I know of that assesses working through more directly, in a more formal analytic sense, is the study of previously warded-off mental contents that emerged in the analysis of the patient known as “Mrs. C.”(1) The study, by Len Horowitz and others, is a brilliant one, but it underscores the difficulty of a content-based estimation of working through: it is not practical to base a research application of the concept on direct measurement of changes in content. A similar approach would be to study the levels of defenses being used, with Chris Perry’s defense assessment instrument. This would provide a valid measure of change in the process, one that directly measures working through. But it would require a before-and-after sample, as would the Horowitz approach, and would be very labor-intensive. Herein lies the great value of the present authors’ approach: it permits a simplification and approximation of this aspect of the analytic process by using assessment methods that should indirectly reflect an analytic process with a component of working through. It is interesting that the authors have taken this approach in light of Vaughan’s recent book, “The Talking Cure”, because the assessment rests on how much the patient appears to be working over the connections between affects, thoughts or behaviors and aspects of the self, of fantasies, of transference reactions, and/or of genetic history. Thus the notion of working through reflected in this research has an important conceptual connection even to the neurophysiological basis of change in psychotherapy and psychoanalysis. The four areas assessed (the self, fantasies, transference reactions, and genetic history) are all central areas in which conflict is experienced. These areas are also well chosen in that they are four of the elements frequently mentioned by the authors’ expert panel of analysts as indicators of analytic process.

Of course, it may turn out that the additional step of specifying which areas are being reflected upon is unnecessary, in that in psychotherapy and psychoanalysis virtually all reflections connecting one subject with another may fall into one of these categories. This would be interesting to check in the data. Another problem not yet worked out in this categorization is that one component of working through is called “observations about Self”, and this is intended to be in the same … In any case, the authors are to be congratulated for this ingenious and thoughtful approach to a psychoanalytic concept that is very difficult to operationalize.

For a process to be considered analytic, there must also be at least some interpretive work by the analyst and some self-reflection on conflictual matters by the patient. One of the authors’ objectives has been to dichotomize a patient population into those showing clear-cut analytic process and those in whom it is absent, because research has demonstrated that when patients attain an insightful process together with the analyst, the outcome is almost always favorable, whereas patients who do not show such a process may or may not do well. I feel the authors are to be praised for their ambition to study further this crucial difference in the outcomes of what were intended (by the analyst) as psychoanalytic procedures. At the same time, I urge them to view any dichotomization of cases as the final step in a journey whose initial steps deserve much more exploration. My study group is just beginning to examine how the patient’s flow of material and self-reflection, both in regard to the analyst or analytic situation and in regard to other aspects of the patient’s life, fluctuate throughout sessions and from one session to the next, even within the same period of the analytic work. The overlap with the CAPS measurements of free association and of self-reflection in regard to the self, fantasies, transference reactions and genetic history is most intriguing. I hope that in a few years both groups will have outlined systematically how much variation there is at any given phase of an analysis, and will have studied any cyclical aspects of ordinary analytic work as well. We will then all be in a much better position to determine what sample size is needed to estimate the quality of an analytic relationship, both from the vantage point of what the patient contributes and from that of the analyst’s work.
Cases can then be divided more meaningfully according to whether, and to what degree, an analytic process is taking place. There can also be a systematic exploration of what may be typical cyclical aspects of psychoanalytic work, first written about by Herbert Schlesinger in the 1970s, which may apply to how both analyst and patient function. We have seen some evidence, for instance, that encouraging elaboration takes place out of phase with interpreting, so to speak. Such cycles would affect the required sample size: in the CAPS assessment one key goal is to find out how well the treatment is proceeding at its best, and the sample must be large enough that scores do not reflect only a period of resistance, which may both follow and be followed by a major breakthrough.

I want to keep my remarks short enough to allow ample opportunity for discussion from the floor. There are other aspects of the study on which I would be interested in commenting, but there are two points I wish to make now in concluding. First, I believe the authors are overly concerned about the lack of agreement among the senior analysts. We found in our study that the same phenomenon occurred when analysts rated on the basis of one session only. Once we realized that we as raters needed to get to know the case well first, so that we were not simply imposing our prejudices upon a sea of ignorance, we rated sessions only after having listened to and read the two or three sessions immediately preceding the one to be rated. In this way, we found, although we started from differing initial preconceptions, we tended to settle on a shared view. So the authors’ expectation that there really is a shared understanding among analysts has in fact been verified. Indeed, we have succeeded in obtaining reliable, agreed-upon ratings of the quality of the analytic process, separately from the point of view of the patient’s contribution and from that of the analyst. These ratings will undoubtedly turn out to be highly correlated with the authors’ assessment of the presence of analytic process, and I look forward to the time when we can compare our scores on the same material. If there is indeed an operationally definable quality of analytic process, as we have shown in our small preliminary sample, then experienced analyst raters really do have an idea of analytic process with enough elements in common to be meaningful!

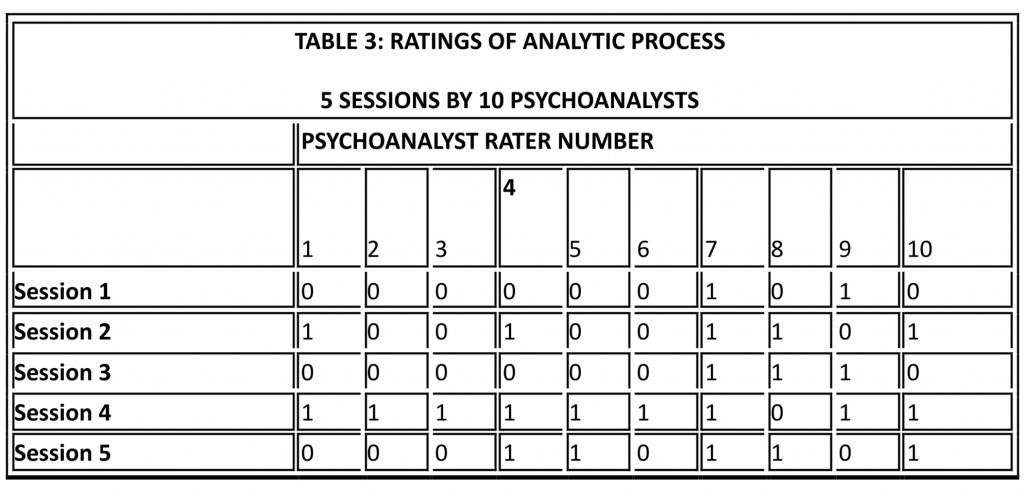

The second point is related: the disagreements among the ten senior analysts were not, in my opinion, as bad as the authors feared. Without any shared or agreed-upon definition, and without having become oriented to the case by studying several introductory sessions as I just described, there was actually a considerable degree of agreement among them. To demonstrate this, I ran the correlations between their scores on the five sessions they rated. Here are the raw data, and as you can see, there is indeed a great deal of variability in the scores. However, I have marked three columns showing the scores of three analysts who completely agreed as to the presence or absence of analytic process in these five sessions. And, as you can see, 26 of the 36 correlations between raters are positive and only 10 negative, which again shows an overall positive association. (There are 36 correlations rather than 45 because one analyst, ANAL7, gave the same rating to all five sessions; with zero variance those scores cannot be correlated, leaving 9 raters and 9 × 8 / 2 = 36 pairs.) So, if the authors and the rating analysts did this well under what our group has found to be exceptionally adverse circumstances, there is great cause for encouragement, and reason to pursue this highly worthwhile study. For example, it makes sense to find out whether the analysts who agree would, in talking together, identify similar issues in the material; then, if they were willing, they could rate some additional hours of the same patients, if available, to study the stability of the ratings from session to session.
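The sign tally in the paragraph above can be checked directly against the correlation matrix in the reliability output reproduced at the end of this discussion. The following is a minimal Python sketch for illustration only, not the procedure used to produce the original figures: it transcribes the printed lower-triangle values (already rounded to four places, with ANAL7 omitted because of zero variance) and counts positive and negative pairs. The names `RATERS`, `LOWER` and `pairwise` are hypothetical. Because the ratings are coded 0/1, each of these Pearson correlations is a phi coefficient.

```python
from statistics import mean

# Raters in the order of the printed correlation matrix; ANAL7 is absent
# because its ratings had zero variance and could not be correlated.
RATERS = ["ANAL1", "ANAL2", "ANAL3", "ANAL4", "ANAL5",
          "ANAL6", "ANAL8", "ANAL9", "ANAL10"]

# Lower triangle of the printed matrix: row i holds the correlations of
# RATERS[i] with every rater listed before it (diagonal 1.0s omitted).
LOWER = [
    [],
    [.6124],
    [.6124, 1.0000],
    [.6667, .4082, .4082],
    [.1667, .6124, .6124, .6667],
    [.6124, 1.0000, 1.0000, .4082, .6124],
    [-.1667, -.6124, -.6124, .1667, -.1667, -.6124],
    [-.1667, .4082, .4082, -.6667, -.1667, .4082, -.6667],
    [.6667, .4082, .4082, 1.0000, .6667, .4082, .1667, -.6667],
]


def pairwise(lower):
    """Map each unordered rater pair (earlier, later) to its correlation."""
    return {
        (RATERS[j], RATERS[i]): lower[i][j]
        for i in range(len(lower))
        for j in range(i)
    }


pairs = pairwise(LOWER)
positive = sum(1 for r in pairs.values() if r > 0)
negative = sum(1 for r in pairs.values() if r < 0)
mean_r = mean(list(pairs.values()))
perfect = [pair for pair, r in pairs.items() if r == 1.0]

print(f"{len(pairs)} pairs: {positive} positive, {negative} negative")
print(f"mean inter-rater r = {mean_r:.4f}")
print("perfect agreement:", perfect)
```

Run as written, this reports 36 pairs, 26 positive and 10 negative, with a mean inter-rater correlation of about .278. The pairs at r = 1.0 form one trio (ANAL2, ANAL3, ANAL6) and one pair (ANAL4, ANAL10); for 0/1 data a phi of 1.0 means those raters gave identical session-by-session ratings.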

So I suggest that the authors attempt to rate some of the same hours that other groups are studying, or have other groups rate their hours, and compare the values on the three components of their analytic process measure with relevant scales from other measures, such as our own, and with scores on Jones’s Psychotherapy Process Q-Set. A related question is the size of the sample needed to obtain a reasonably stable and representative assessment. I made a preliminary investigation of some of our data in this respect and found that, in the small sample available so far, there is considerable variability in the presence of the various components making up the process. I am sure that such a study will demonstrate the fluctuating nature of all the scores, which I hope and believe will vary together, strengthening the evidence for the construct validity of each of them. The authors divided each session into thirds, so as to keep the amount of material that must be held in mind at once to a manageable size. This accords with our experience; we divide sessions into segments that constitute meaningful units. Such an approach could be tried with the CAPS as well, but in any case, the attention to what segment size yields reliable ratings is characteristic of the care with which this study has been carried out. Since the study we have planned for the next two years is intended to answer precisely this question, there could be considerable benefit in collaboration!

Some of my suggestions for the CAPS are predicated on obtaining further cooperation from senior analysts in carrying out the ratings. I know how difficult that can be. In this study, ten seasoned analysts actually read through and rated five sessions, which testifies to the persuasive power of this research group. However, it may be hard to obtain more effort from them, and in this respect the study highlights the great need for more tangible financial and institutional support for psychoanalytic research. Offering to have those analysts meet to discuss the material could serve a function similar to that of a retreat, namely to forge bonds that will stimulate further willingness to invest time and effort in the enterprise. I would recommend this as a goal, along with presenting the findings to date, including those from the ten analysts, in the positive light that I feel they very much deserve.

(Ratings coded 1 = analytic process present, 0 = absent)

RELIABILITY ANALYSIS - SCALE (ALPHA)

*** ANAL7 has zero variance (not included in the analysis below)

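Correlation Matrix

| | ANAL1 | ANAL2 | ANAL3 | ANAL4 | ANAL5 | ANAL6 | ANAL8 | ANAL9 | ANAL10 |
|---|---|---|---|---|---|---|---|---|---|
| ANAL1 | 1.0000 | | | | | | | | |
| ANAL2 | .6124 | 1.0000 | | | | | | | |
| ANAL3 | .6124 | 1.0000 | 1.0000 | | | | | | |
| ANAL4 | .6667 | .4082 | .4082 | 1.0000 | | | | | |
| ANAL5 | .1667 | .6124 | .6124 | .6667 | 1.0000 | | | | |
| ANAL6 | .6124 | 1.0000 | 1.0000 | .4082 | .6124 | 1.0000 | | | |
| ANAL8 | -.1667 | -.6124 | -.6124 | .1667 | -.1667 | -.6124 | 1.0000 | | |
| ANAL9 | -.1667 | .4082 | .4082 | -.6667 | -.1667 | .4082 | -.6667 | 1.0000 | |
| ANAL10 | .6667 | .4082 | .4082 | 1.0000 | .6667 | .4082 | .1667 | -.6667 | 1.0000 |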

Item-total Statistics

| Item | Scale Mean if Item Deleted | Scale Variance if Item Deleted | Corrected Item-Total Correlation | Alpha if Item Deleted |
|---|---|---|---|---|
| ANAL1 | 3.4000 | 5.3000 | .6344 | .6900 |
| ANAL2 | 3.6000 | 5.3000 | .8256 | .6685 |
| ANAL3 | 3.6000 | 5.3000 | .8256 | .6685 |
| ANAL4 | 3.2000 | 5.2000 | .6805 | .6813 |
| ANAL5 | 3.4000 | 5.3000 | .6344 | .6900 |
| ANAL6 | 3.6000 | 5.3000 | .8256 | .6685 |
| ANAL8 | 3.2000 | 8.2000 | -.4144 | .8502 |
| ANAL9 | 3.2000 | 7.7000 | -.2632 | .8312 |
| ANAL10 | 3.2000 | 5.2000 | .6805 | .6813 |

Reliability Coefficients (9 items)

Alpha = .7500; Standardized item alpha = .7761
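As a check on these two coefficients, both can be recomputed by hand from figures printed above. This is a reconstruction for illustration, not part of the original analysis: it assumes the usual sample variances (denominator n − 1) over the n = 5 rated sessions, treats the k = 9 raters as scale items, and applies the standard formulas for raw and standardized Cronbach’s alpha.

```latex
\begin{aligned}
% Standardized alpha: \bar r is the mean of the 36 off-diagonal correlations
\bar r &= \tfrac{10.011}{36} \approx 0.2781, &
\alpha_{\text{std}} &= \frac{k\,\bar r}{1 + (k-1)\,\bar r}
  = \frac{9(0.2781)}{1 + 8(0.2781)} \approx 0.7761 \\[4pt]
% Item proportions: the nine "scale mean if item deleted" values sum to (k-1)\bar X = 30.4
\bar X &= \tfrac{30.4}{8} = 3.8, &
p_i &= \bar X - (\text{scale mean if item } i \text{ deleted}) \in \{0.2,\ 0.4,\ 0.6\} \\[4pt]
% Item variances with n = 5 sessions: six items at 0.3, three at 0.2
s_i^2 &= \tfrac{5}{4}\,p_i(1-p_i), &
\textstyle\sum_i s_i^2 &= 6(0.3) + 3(0.2) = 2.4 \\[4pt]
% Total-score variance, recovered from the ANAL1 row of the item-total table
s_X^2 &= s_{X-1}^2 + s_1^2 + 2\,r_{1,X-1}\,s_{X-1}\,s_1
  = 5.3 + 0.3 + 2(0.6344)\sqrt{5.3}\sqrt{0.3} \approx 7.2 \\[4pt]
\alpha &= \frac{k}{k-1}\left(1 - \frac{\sum_i s_i^2}{s_X^2}\right)
  = \frac{9}{8}\left(1 - \frac{2.4}{7.2}\right) = 0.75
\end{aligned}
```

Here $s_{X-1}^2$ and $r_{1,X-1}$ are the “Scale Variance if Item Deleted” and “Corrected Item-Total Correlation” entries for ANAL1; any other row of the item-total table yields the same total variance of about 7.2.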

(1) Horowitz, L., Sampson, H., Siegelman, E., Wolfson, A., & Weiss, J. (1975). On the identification of warded-off mental contents: an empirical and methodological contribution. J. Abnormal Psychol., 84:545-558.